GeCoLa

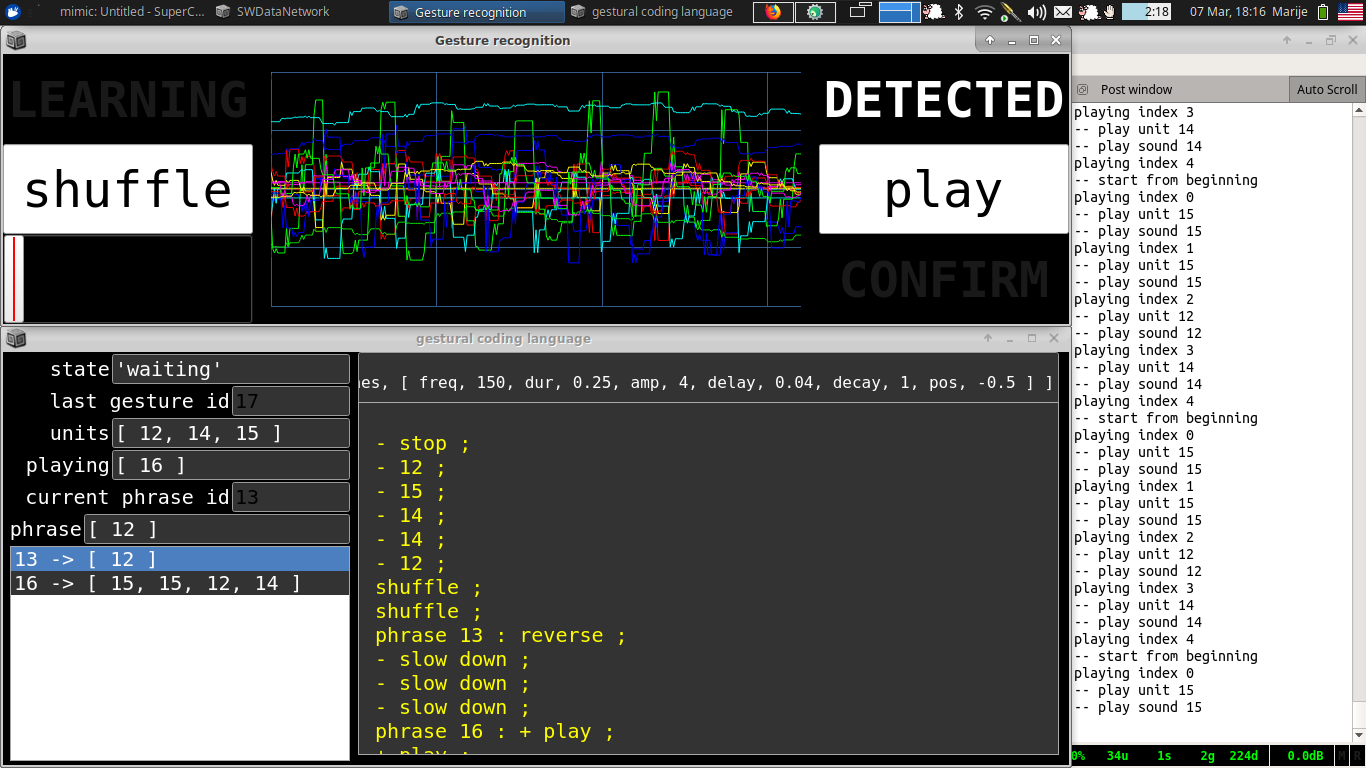

GeCoLa (GEstural COding LAnguage) is a project that explores how a programming language written in gestures could work.

It is a minimal language for creating sequences of sounds and manipulating them. All of its keywords and variable names are expressed as gestures.

The language has two types of objects:

- units, which each generate a sound

- phrases, which are sequences of units and/or other phrases
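The two object types can be pictured as a small recursive data structure. The following is a minimal Python sketch, not GeCoLa's actual implementation: the class names `Unit` and `Phrase`, the `sounds()` method, and the use of a sound name string to stand for a generated sound are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Union


@dataclass
class Unit:
    """A unit generates one sound; a sound name (hypothetical) stands in for the audio."""
    sound: str


@dataclass
class Phrase:
    """A phrase is an ordered sequence of units and/or other phrases."""
    items: List[Union[Unit, "Phrase"]] = field(default_factory=list)

    def sounds(self) -> List[str]:
        """Flatten the phrase into the sounds it would play, in order."""
        result: List[str] = []
        for item in self.items:
            if isinstance(item, Unit):
                result.append(item.sound)
            else:
                result.extend(item.sounds())  # nested phrase
        return result


kick, snare, hat = Unit("kick"), Unit("snare"), Unit("hat")
beat = Phrase([kick, snare])
groove = Phrase([beat, hat, beat])  # a phrase that contains another phrase twice
print(groove.sounds())  # ['kick', 'snare', 'hat', 'kick', 'snare']
```

Because a phrase may hold other phrases, flattening is naturally recursive.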

An object is declared by performing the gesture for ‘new’ and the gesture for either ‘unit’ or ‘phrase’. The system then guides the performer through training a new gesture, which becomes the ‘name’ of the variable.
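Declaration binds a freshly trained gesture to a new object. In the sketch below, a hypothetical recognizer is assumed to report keyword gestures as strings and trained name gestures as integer labels, and `train_new_gesture()` only stands in for the guided training step by handing out the next free label; none of these names come from GeCoLa itself. The `sound` argument is also an assumption, since the text does not say how a unit's sound is chosen.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Union


@dataclass
class Unit:
    sound: str  # hypothetical: a sound name stands in for the generated sound


@dataclass
class Phrase:
    items: List[Union[Unit, "Phrase"]] = field(default_factory=list)


# Hypothetical keyword gestures, as reported by the recognizer.
NEW, UNIT, PHRASE = "new", "unit", "phrase"


class Interpreter:
    def __init__(self) -> None:
        # Symbol table: label of a trained 'name' gesture -> the object it names.
        self.variables: Dict[int, Union[Unit, Phrase]] = {}
        self._next_label = 1

    def train_new_gesture(self) -> int:
        """Stand-in for the guided training step: returns a fresh, unused label."""
        label = self._next_label
        self._next_label += 1
        return label

    def declare(self, first: str, kind: str, sound: str = "") -> int:
        """Handle the gestures 'new' + 'unit'/'phrase' and return the new name label."""
        if first != NEW:
            raise ValueError("a declaration starts with the 'new' gesture")
        if kind == UNIT:
            obj: Union[Unit, Phrase] = Unit(sound)
        elif kind == PHRASE:
            obj = Phrase()
        else:
            raise ValueError(f"expected 'unit' or 'phrase', got {kind!r}")
        label = self.train_new_gesture()
        self.variables[label] = obj
        return label


interp = Interpreter()
kick = interp.declare(NEW, UNIT, sound="kick")
loop = interp.declare(NEW, PHRASE)
print(kick, loop)  # 1 2
```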

Whenever the gesture of a variable is performed, the system will play back the sound or sequence connected to that variable once.
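Playback can be thought of as a lookup in the table of trained names followed by a walk through the object. This minimal sketch assumes that the recognizer reports a gesture as an integer label and that `play` is some callback that outputs one sound; `on_gesture`, `flatten` and the label values are hypothetical. Timing (speed) is ignored here; only the order of the sounds is modelled.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Union


@dataclass
class Unit:
    sound: str  # hypothetical: a sound name stands in for the audio


@dataclass
class Phrase:
    items: List[Union[Unit, "Phrase"]] = field(default_factory=list)


def flatten(obj: Union[Unit, Phrase]) -> List[str]:
    """List the sounds an object produces, depth-first and in order."""
    if isinstance(obj, Unit):
        return [obj.sound]
    sounds: List[str] = []
    for item in obj.items:
        sounds.extend(flatten(item))
    return sounds


def on_gesture(label: int,
               variables: Dict[int, Union[Unit, Phrase]],
               play: Callable[[str], None]) -> None:
    """Called for each recognized gesture: play the bound object exactly once."""
    obj = variables.get(label)
    if obj is None:
        return  # not a variable name (e.g. a keyword gesture): nothing to play
    for sound in flatten(obj):
        play(sound)


kick, snare = Unit("kick"), Unit("snare")
variables = {1: kick, 2: Phrase([kick, snare, kick])}
played: List[str] = []
on_gesture(2, variables, played.append)
print(played)  # ['kick', 'snare', 'kick']
```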

The performer can manipulate a phrase in various ways, either by adding (plus) or subtracting (minus) something:

- add/subtract a unit or phrase (some measures are taken to avoid recursion)

- add/subtract speed (how quickly the sounds are played one after another)

- add/subtract players - when a player is added, it plays the phrase repeatedly until the player is removed again.

- add/subtract space - this was a choreographic concept that still needs to be translated into something sonic; it could relate to a reverberation effect on the sound
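The plus/minus operations could be modelled as two methods that dispatch on what is being added or subtracted. The sketch below is one possible interpretation, not the project's code: the step sizes, the lower bound on speed, the 0 to 1 range for space and the string arguments `"speed"`, `"player"` and `"space"` are all hypothetical. The text only says that some measures are taken against recursion; here this is assumed to be a check that refuses to add a phrase that is, or already contains, the target phrase.

```python
from dataclasses import dataclass, field
from typing import List, Union

SPEED_STEP = 0.5   # hypothetical change in sounds per second per plus/minus
MIN_SPEED = 0.5    # hypothetical floor so a phrase never stops completely
SPACE_STEP = 0.25  # hypothetical change in space (e.g. a future reverb mix)


@dataclass
class Unit:
    sound: str


@dataclass(eq=False)  # compare phrases by identity, not by contents
class Phrase:
    items: List[Union[Unit, "Phrase"]] = field(default_factory=list)
    speed: float = 1.0   # sounds per second (hypothetical unit)
    players: int = 0     # number of players looping this phrase
    space: float = 0.0   # 0..1, not yet mapped to any sound effect

    def contains(self, other: "Phrase") -> bool:
        """True if `other` is this phrase or is nested anywhere inside it."""
        if self is other:
            return True
        return any(isinstance(i, Phrase) and i.contains(other) for i in self.items)

    def plus(self, what: Union[Unit, "Phrase", str]) -> None:
        if isinstance(what, (Unit, Phrase)):
            # Recursion guard (assumed): refuse a phrase that is, or contains, this one.
            if isinstance(what, Phrase) and what.contains(self):
                raise ValueError("refused: this would make the phrase contain itself")
            self.items.append(what)
        elif what == "speed":
            self.speed += SPEED_STEP
        elif what == "player":
            self.players += 1
        elif what == "space":
            self.space = min(1.0, self.space + SPACE_STEP)
        else:
            raise ValueError(f"cannot add {what!r}")

    def minus(self, what: Union[Unit, "Phrase", str]) -> None:
        if isinstance(what, (Unit, Phrase)):
            # Remove the last occurrence of this exact object, if present.
            for i in range(len(self.items) - 1, -1, -1):
                if self.items[i] is what:
                    del self.items[i]
                    break
        elif what == "speed":
            self.speed = max(MIN_SPEED, self.speed - SPEED_STEP)
        elif what == "player":
            self.players = max(0, self.players - 1)
        elif what == "space":
            self.space = max(0.0, self.space - SPACE_STEP)
        else:
            raise ValueError(f"cannot subtract {what!r}")


a = Phrase([Unit("kick")])
b = Phrase([a, Unit("snare")])
a.plus("player")
a.plus("speed")
try:
    a.plus(b)  # b already contains a, so this is refused
except ValueError as err:
    print(err)
print(a.players, a.speed, len(a.items))  # 1 1.5 1
```

Refusing the addition up front keeps every phrase finite, so flattening or looping it always terminates.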

A phrase can also be copied with the copy operator. The performer is then guided again to train the system with a new gesture, which becomes the variable name for the copy. Afterwards, the copy can be manipulated to create a variation of the original phrase.
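One way to read the copy operator is sketched below. It assumes that the copy gets its own list of items (so adding, removing or reordering items leaves the original untouched) while nested units and phrases are shared, and that the copy starts without players. These choices, the `copy_phrase` helper and the integer `new_label` standing in for the newly trained gesture are all assumptions.

```python
import dataclasses
from dataclasses import dataclass, field
from typing import Dict, List, Union


@dataclass
class Unit:
    sound: str


@dataclass(eq=False)
class Phrase:
    items: List[Union[Unit, "Phrase"]] = field(default_factory=list)
    speed: float = 1.0
    players: int = 0


def copy_phrase(original: Phrase,
                variables: Dict[int, Union[Unit, Phrase]],
                new_label: int) -> Phrase:
    """Copy operator: bind a copy of `original` to a newly trained gesture label.

    The copy gets its own item list, so changing the copy's items leaves the
    original alone; nested units and phrases are shared, not duplicated.
    The copy starts with no players (an assumption).
    """
    duplicate = dataclasses.replace(original, items=list(original.items), players=0)
    variables[new_label] = duplicate
    return duplicate


kick, snare = Unit("kick"), Unit("snare")
theme = Phrase([kick, snare], speed=2.0, players=1)
variables: Dict[int, Union[Unit, Phrase]] = {1: theme}
variation = copy_phrase(theme, variables, new_label=2)  # 2 = hypothetical new label
variation.items.reverse()
variation.speed = 3.0
print([u.sound for u in theme.items], theme.speed)          # ['kick', 'snare'] 2.0
print([u.sound for u in variation.items], variation.speed)  # ['snare', 'kick'] 3.0
```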

Finally, the order of units/phrases in a phrase can also be reversed or shuffled (put in a random order).
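Reversing and shuffling only change the order of the items inside a phrase. A minimal sketch, assuming the items are held in a Python list and that only the top level is reordered (nested phrases keep their own order); the function names are hypothetical, and sound names stand in for units. Passing a seeded `random.Random` makes a shuffle reproducible, e.g. for testing.

```python
import random
from typing import List, Optional, TypeVar

T = TypeVar("T")  # an item of a phrase: a unit or a phrase


def reverse_phrase(items: List[T]) -> None:
    """Reverse the order of the units/phrases in a phrase, in place."""
    items.reverse()


def shuffle_phrase(items: List[T], rng: Optional[random.Random] = None) -> None:
    """Put the units/phrases of a phrase in a random order, in place.

    Pass a seeded random.Random to get a reproducible order.
    """
    (rng if rng is not None else random).shuffle(items)


items = ["kick", "snare", "hat", "clap"]  # sound names stand in for units
reverse_phrase(items)
print(items)  # ['clap', 'hat', 'snare', 'kick']
shuffle_phrase(items, random.Random(42))
print(sorted(items))  # ['clap', 'hat', 'kick', 'snare']
```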

Performance: the machine is learning

Machine learning is hailed both as the solution to present-day problems and as one of the greatest threats to life as we know it. The performance ‘the machine is learning’ is a theatrical piece that highlights the process of training a machine with real-time gestures: the labour that is absent from most discussions of machine learning.

This performance evolved out of the development of GeCoLa during the MIMIC residency at Sussex University.

Credits

Thanks to Tamar Clarke Brown, Jana Bitterová, and Joana Chicau for their input on the movement gestures during CCL-AMS19, and to all the other CCL participants for the inspiration and conversations!

The Gesture Recognition Toolkit by Nick Gillian was used to detect the gestures.